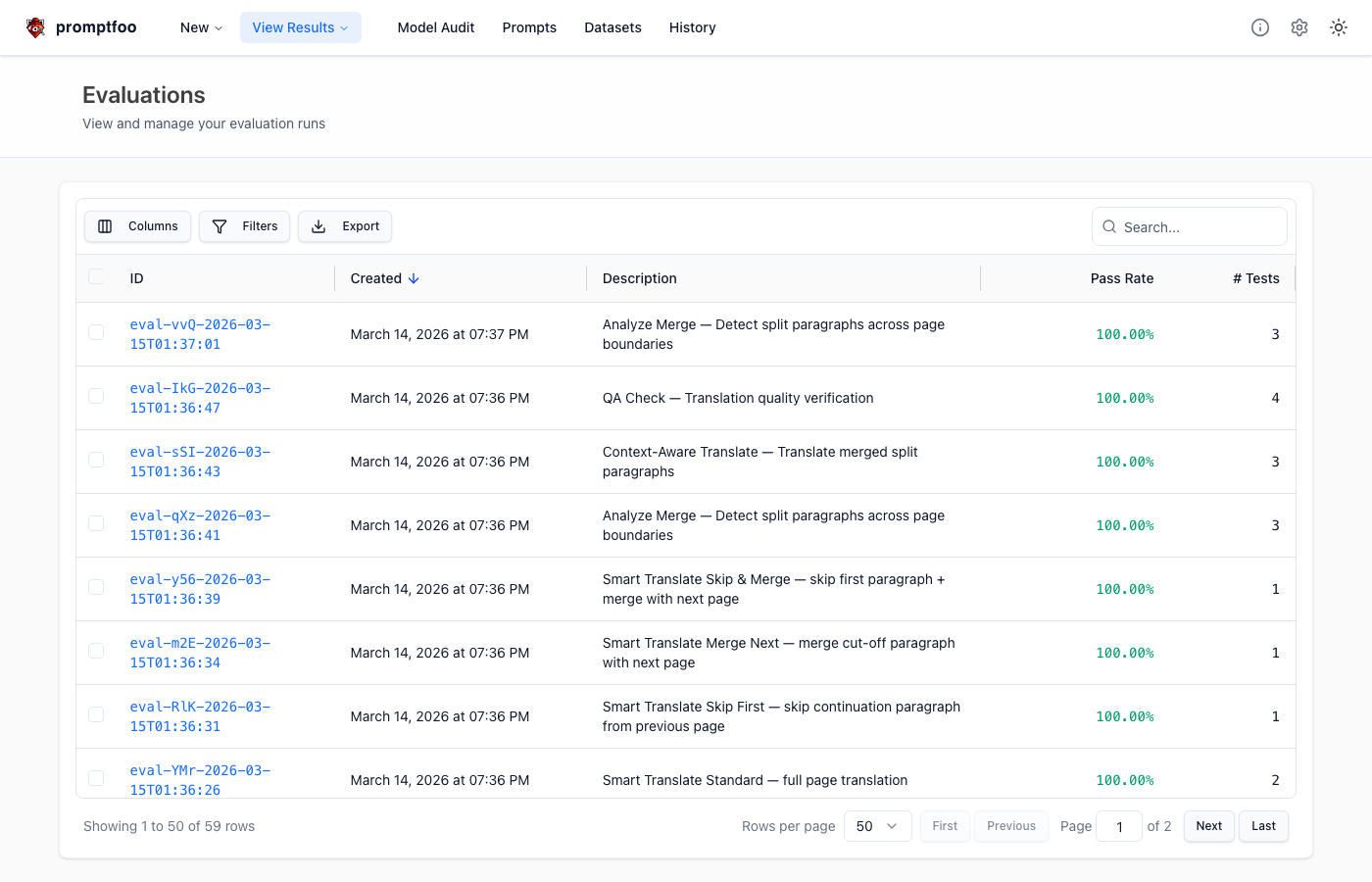

This is the sequel to Part 1: Promptfoo — Jest + Security Scanner for LLM Apps. Part 1 covered what Promptfoo is and how to set it up. This post covers how I used TDD-style testing to catch real prompt bugs in a Vision model workflow.

The Problem: Vision Model Ignores Instructions

Translator Pro's smart-translate workflow has a special case. When a sentence is cut off at a PDF page boundary, the workflow supplies the next page's image as a reference, and the model should take only the continuation of the cut-off sentence from it.

The problem: when you give a Vision model (Gemini) two images, it completely ignores the text instruction "only grab the first paragraph" and translates the entire second page.

Original prompt:

INCLUDE the first paragraph from the next page image — it is a continuation

of the last paragraph on the current page. Merge them into one coherent

paragraph in the translation

The model translated everything on the next page: dropout regularization, transfer learning, etc. No matter how strongly you say "ignore" in text, the visual information was stronger.

Red: Write Failing Tests First

TDD step one. Create assertions that objectively detect the problem.

assert:
  # Content after the continuation should NOT be included
  - type: javascript
    value: "!output.includes('드롭아웃') && !output.includes('dropout')"
  - type: javascript
    value: "!output.includes('전이 학습') && !output.includes('Transfer Learning')"

Run promptfoo eval.

Results: ✓ 0 passed, ✗ 1 failed

The test failed as expected. This moment was satisfying. The prompt problem was proven by a red test, not a gut feeling.
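Inline expressions like the ones above get repetitive as fixtures grow. Here is a minimal TypeScript sketch of the same check as a reusable function. `excludesAll` and the sample strings are hypothetical, and unlike the inline assertions it matches case-insensitively, which is an assumption about the behavior you want:

```typescript
// Hypothetical helper: true only when none of the forbidden keywords
// appear in the model output (compared case-insensitively).
function excludesAll(output: string, forbidden: string[]): boolean {
  const haystack = output.toLowerCase();
  return forbidden.every((kw) => !haystack.includes(kw.toLowerCase()));
}

// Keywords from the assertions above; they belong to the next page only.
const nextPageOnly = ["드롭아웃", "dropout", "전이 학습", "Transfer Learning"];

// Made-up outputs, for illustration only.
const leaked = "Dropout regularization reduces overfitting.";
const clean = "Gradient descent minimizes the loss.";

console.log(excludesAll(leaked, nextPageOnly)); // false
console.log(excludesAll(clean, nextPageOnly)); // true
```

The point of keeping the check this dumb is determinism: a substring test gives the same verdict on every run, so a red result reflects the prompt and not the grader.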

Green: Label the Images with Roles

Strengthening text instructions alone wasn't enough. The key insight was assigning roles to the images themselves through structural changes.

Before:

{{pageImage}}

{{nextPageImage}}

Translate the document in the image...

After:

## Target Page (TRANSLATE THIS)

{{pageImage}}

## Reference Page (DO NOT TRANSLATE — use only to complete a cut-off sentence)

{{nextPageImage}}

Translate ONLY the Target Page...

Simple ML textbook tests passed.

Results: ✓ 1 passed, ✗ 0 failed

But it wasn't over yet.

Complex Real Pages Fail Again

I added more test cases, this time from a Redis manual page with 2 tables, diagrams, and code blocks: visually complex material.

Results: ✓ 1 passed, ✗ 1 failed

Red again. Simple textbook pages passed, but visually complex pages still pulled in second page content.

Refactor: Conditional Prompts Don't Work

Initially I tried injecting conditions into a single prompt via a {{boundarySection}} variable, with different instructions for different situations.

This caused instruction conflicts:

- "Translate ALL content" vs "SKIP the first paragraph" clash

- "Reference Page (DO NOT TRANSLATE)" label showing even when there's no image

- Adding a Self-Check section accidentally deleted tables from the Target Page

When the cases are clear-cut, split the prompts.

I identified 4 distinct cases and split them into independent prompt files:

| Prompt | Images | Behavior |
|---|---|---|
| smart-translate-standard | 1 | Full translation |
| smart-translate-skip-first | 1 | Skip first paragraph |
| smart-translate-merge-next | 2 | Merge with next page |
| smart-translate-skip-and-merge | 2 | Skip + merge |

Each prompt does only its job — instruction conflicts eliminated at the source.
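As a rough illustration, choosing among the four prompts can be a small pure function. The `PageBoundary` shape, its field names, and `selectPrompt` are hypothetical, and the mapping assumes that "skip first paragraph" applies when the page opens with a continuation that was already merged into the previous page's translation:

```typescript
type PromptName =
  | "smart-translate-standard"
  | "smart-translate-skip-first"
  | "smart-translate-merge-next"
  | "smart-translate-skip-and-merge";

// Hypothetical description of how a page relates to its neighbors.
interface PageBoundary {
  startsWithContinuation: boolean; // first paragraph belongs to the previous page
  endsMidSentence: boolean; // last sentence continues on the next page
}

// Exactly one prompt per case, so no prompt carries conflicting instructions.
function selectPrompt(b: PageBoundary): PromptName {
  if (b.startsWithContinuation && b.endsMidSentence) {
    return "smart-translate-skip-and-merge";
  }
  if (b.endsMidSentence) return "smart-translate-merge-next";
  if (b.startsWithContinuation) return "smart-translate-skip-first";
  return "smart-translate-standard";
}

// Only the merge variants need the next page's image (see the table above).
function imageCount(prompt: PromptName): 1 | 2 {
  const needsNextPage =
    prompt === "smart-translate-merge-next" ||
    prompt === "smart-translate-skip-and-merge";
  return needsNextPage ? 2 : 1;
}

console.log(selectPrompt({ startsWithContinuation: false, endsMidSentence: true }));
// smart-translate-merge-next
```

Moving the branching into code means the model never sees an instruction that does not apply to the page in front of it.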

Results: ✓ All passing

Green. But one more hurdle remained.

Plot Twist: Eval Passes but App Fails

All promptfoo eval tests passed. But running the actual app still translated the entire second page.

What was different?

Root Cause: Image Delivery Order

In promptfoo eval, images go where the placeholder is in the prompt file. The model sees:

[text] "## Target Page (TRANSLATE THIS)"

[image] page 8 ← Target

[text] "## Reference Page (DO NOT TRANSLATE)"

[image] page 9 ← Reference

[text] "Translate ONLY the Target Page..."

But the app's parsePromptFile was stripping placeholders, and graph.ts was appending images at the very end:

[text] "## Target Page... ## Reference Page... Translate ONLY..."

[image] page 8 ← which is Target? Can't tell

[image] page 9

Labels and images were separated, so the model couldn't associate them.

Fix: Image Interleaving

Added userSegments (text/image splitting) to parsePromptFile, and modified graph.ts to interleave text-image-text-image-text:

[text] "## Target Page (TRANSLATE THIS)"

[image] page 8 ← Target

[text] "## Reference Page (DO NOT TRANSLATE)"

[image] page 9 ← Reference

[text] "Translate ONLY the Target Page..."

I confirmed that the app now works correctly.
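The interleaving idea can be sketched in TypeScript as follows. This is not the actual parsePromptFile or graph.ts code, which is not shown in this post. The names `splitIntoSegments`, `interleave`, `Segment`, and `ContentPart` are hypothetical, and the content-part shape is a generic stand-in rather than any provider's API:

```typescript
type Segment =
  | { kind: "text"; text: string }
  | { kind: "image"; name: string };

type ContentPart =
  | { type: "text"; text: string }
  | { type: "image"; data: string };

// Split a template on {{placeholder}} markers, preserving order.
// split() with a capture group yields: text, name, text, name, ..., text.
function splitIntoSegments(template: string): Segment[] {
  const segments: Segment[] = [];
  template.split(/\{\{(\w+)\}\}/).forEach((part, i) => {
    if (i % 2 === 1) {
      segments.push({ kind: "image", name: part });
    } else if (part.trim() !== "") {
      segments.push({ kind: "text", text: part.trim() });
    }
  });
  return segments;
}

// Replace each image segment with its data, at the placeholder's position.
function interleave(
  segments: Segment[],
  images: Record<string, string>
): ContentPart[] {
  return segments.map((seg): ContentPart => {
    if (seg.kind === "text") return { type: "text", text: seg.text };
    const data = images[seg.name];
    if (data === undefined) {
      throw new Error("Missing image for placeholder: " + seg.name);
    }
    return { type: "image", data };
  });
}

const template = [
  "## Target Page (TRANSLATE THIS)",
  "{{pageImage}}",
  "## Reference Page (DO NOT TRANSLATE)",
  "{{nextPageImage}}",
  "Translate ONLY the Target Page...",
].join("\n");

const parts = interleave(splitIntoSegments(template), {
  pageImage: "<base64 of page 8>",
  nextPageImage: "<base64 of page 9>",
});
console.log(parts.map((p) => p.type).join(" -> "));
// text -> image -> text -> image -> text
```

Throwing on a missing image is a deliberate choice in this sketch: silently dropping an image would leave a label with nothing under it, which is the same failure mode in a different form.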

Key Lessons

Vision Model Prompt Characteristics

- Two or more images = model tries to process everything — text saying "ignore" doesn't work well

- Labeling images with roles is effective — structural separation like "Target Page" vs "Reference Page"

- Structural separation > text instruction strengthening — a heading before an image is more powerful than "IGNORE everything else"

- Image position matters — if labels and images are separated, the model can't make the connection. Always place the image immediately after its label

Conditional Prompts Fail

Injecting conditions into a single prompt causes instruction conflicts, unnecessary labels create confusion, and workarounds have side effects. When cases are clear-cut, split the prompts.

Eval ≠ App Behavior

- Eval passing doesn't guarantee app works — image delivery mechanisms differ

- Use .prompt.json as the single source of truth for both TS code and promptfoo eval

- Segment splitting in prompt files ensures both environments behave identically

Assertion Writing Tips

- Keywords that exist on both pages of a fixture will cause false positives (e.g., redis-cli appears on both page 8 and page 9)

- Assertions should verify with content unique to the specific page
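One way to guard against shared keywords is to filter candidates against the text of both pages before writing the assertion. A minimal sketch, assuming you have the plain text of each fixture page available; `referenceOnlyKeywords` and the sample page text are made up for illustration:

```typescript
// Hypothetical helper: keep only keywords that appear on the reference
// page and do NOT appear on the target page (case-insensitive).
function referenceOnlyKeywords(
  candidates: string[],
  targetPageText: string,
  referencePageText: string
): string[] {
  const target = targetPageText.toLowerCase();
  const reference = referencePageText.toLowerCase();
  return candidates.filter((kw) => {
    const k = kw.toLowerCase();
    return reference.includes(k) && !target.includes(k);
  });
}

// Invented stand-ins for the text of page 8 and page 9.
const page8 = "Connect with redis-cli and inspect string keys.";
const page9 = "Use redis-cli to check replication status.";

console.log(referenceOnlyKeywords(["redis-cli", "replication"], page8, page9));
// [ 'replication' ]
```

Here redis-cli is dropped because a correct translation of page 8 would contain it too, so only "replication" is safe to use in a "must not appear" assertion.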

The Prompt TDD Workflow

This cycle follows TDD's red-green-refactor, with an explicit red check and a final app check added:

- Red — Write failing tests first (with deterministic assertions)

- Confirm Red — Prompt problem objectively verified

- Green — Fix the prompt to pass

- Refactor — Improve prompt structure (splitting, labeling, etc.)

- App verification — Eval passing ≠ app working, always verify in the actual app

Wrapping Up

"Prompt engineering" often conjures images of tweaking prompts by intuition. With promptfoo eval, you can turn this into an engineering process. Write a failing test, modify the prompt to pass it, verify no regressions. Same as code TDD.

Especially with Vision models where behavior is hard to predict, manually checking "did it work or not" has limits. With automated assertions, you can modify prompts with confidence.